MLflow is a tool for managing the lifecycle of machine learning projects. It is composed of three components:

- Tracking: Records the parameters, metrics and artifacts of each run of a model.

- Projects: A format for packaging data science projects and their dependencies.

- Models: A generic format for packaging ML models and serving them through a REST API or other means.

ML Tracking using XGBoost

Let’s work through a quick sample that demonstrates the benefits of MLflow by tracking an ML experiment using XGBoost and the Census Income Data Set.

    with mlflow.start_run():

In this example, we’re using the MLflow Python API to track the experiment parameters, metric (accuracy), artifacts (our plot) and the XGBoost model.

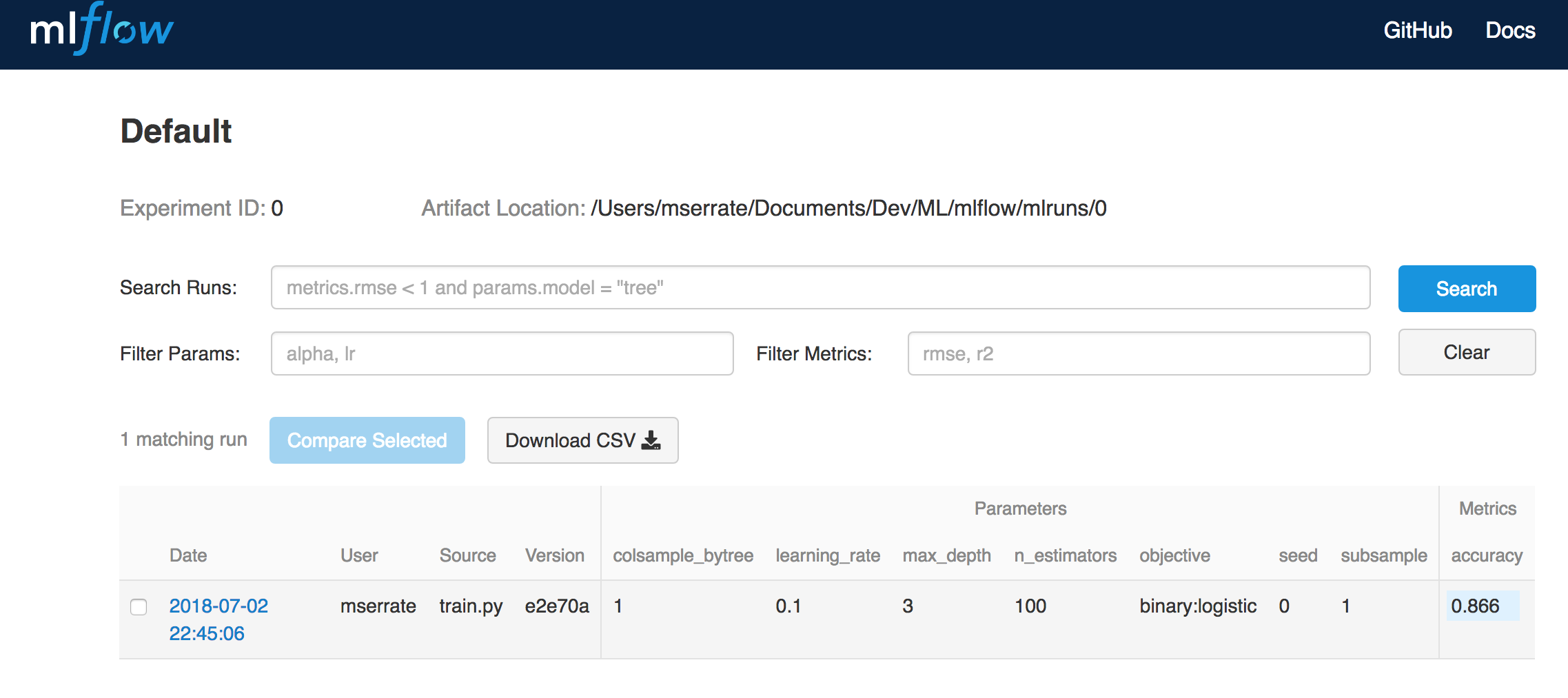

When we run it for the first time, the MLflow UI shows the following:

With our initial parameters, the accuracy metric is 0.866 (86.6%).

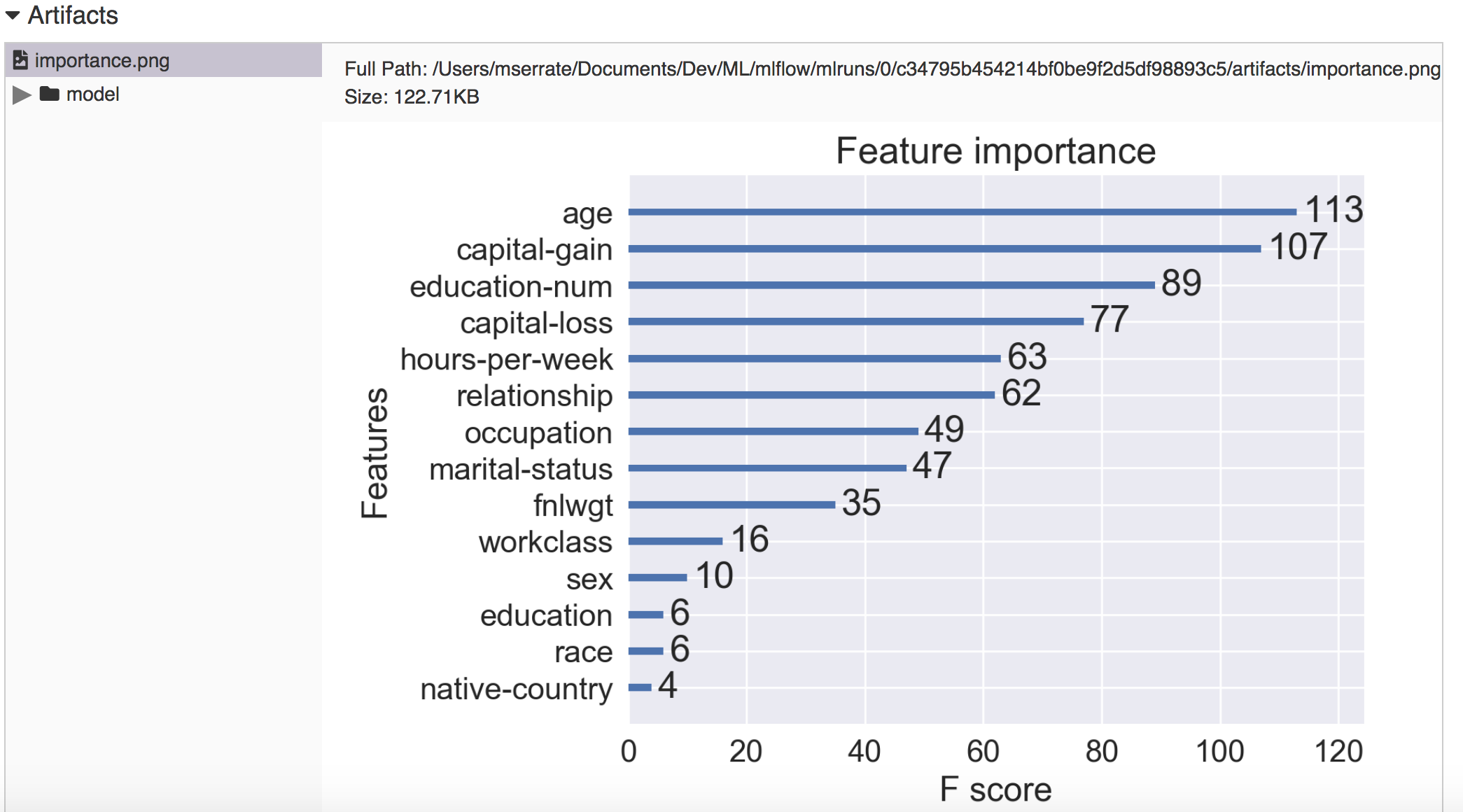

If we select the run, we can see our artifact:

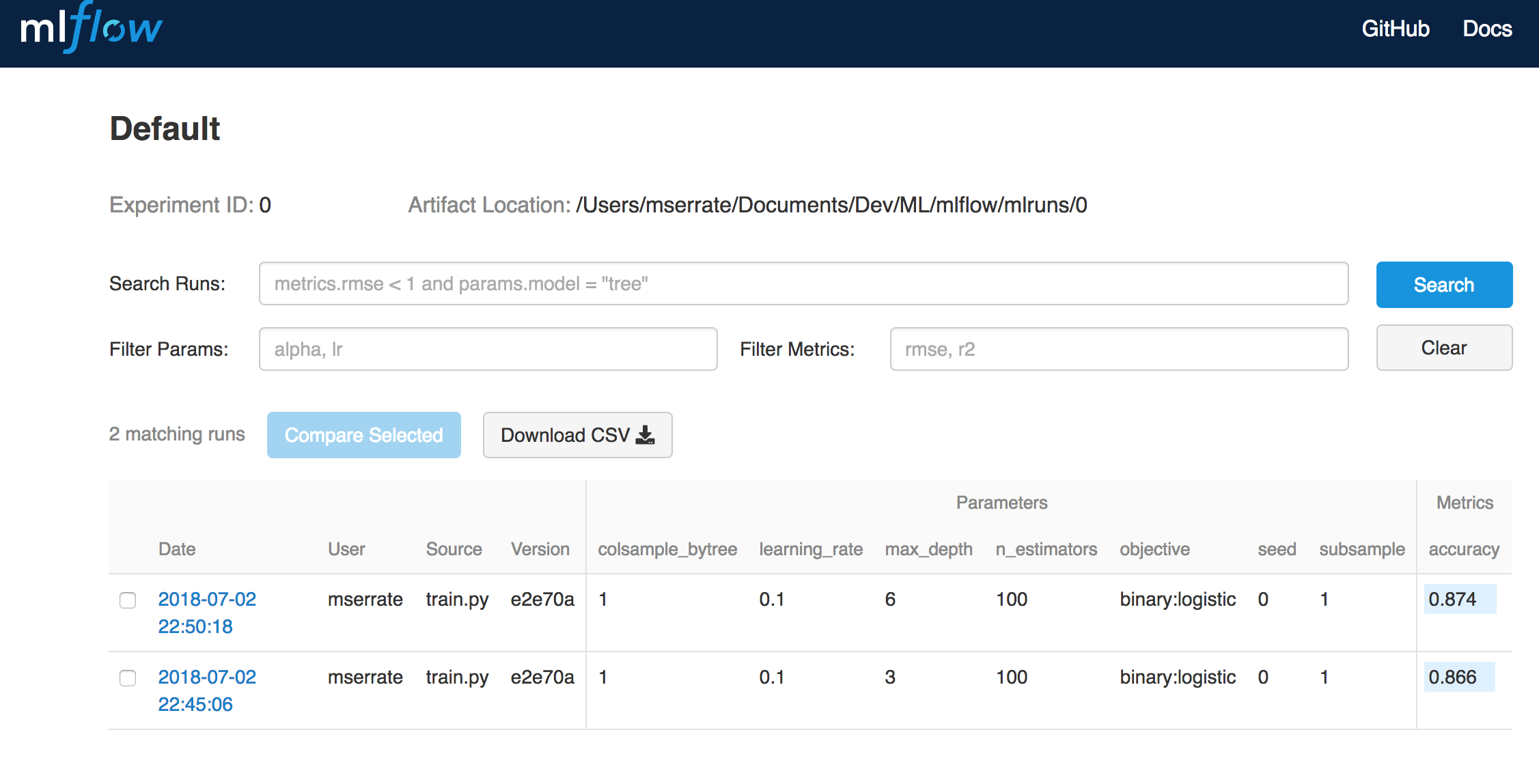

Next, we change the max_depth parameter to 6 and see what happens:

Our accuracy has improved to 0.874 (87.4%).

The full history is tracked, along with the model itself, so we keep a record of every experiment and of how the model performed at each point in time.

You can check the full sample on GitHub: https://github.com/mserrate/mlflow-sample.